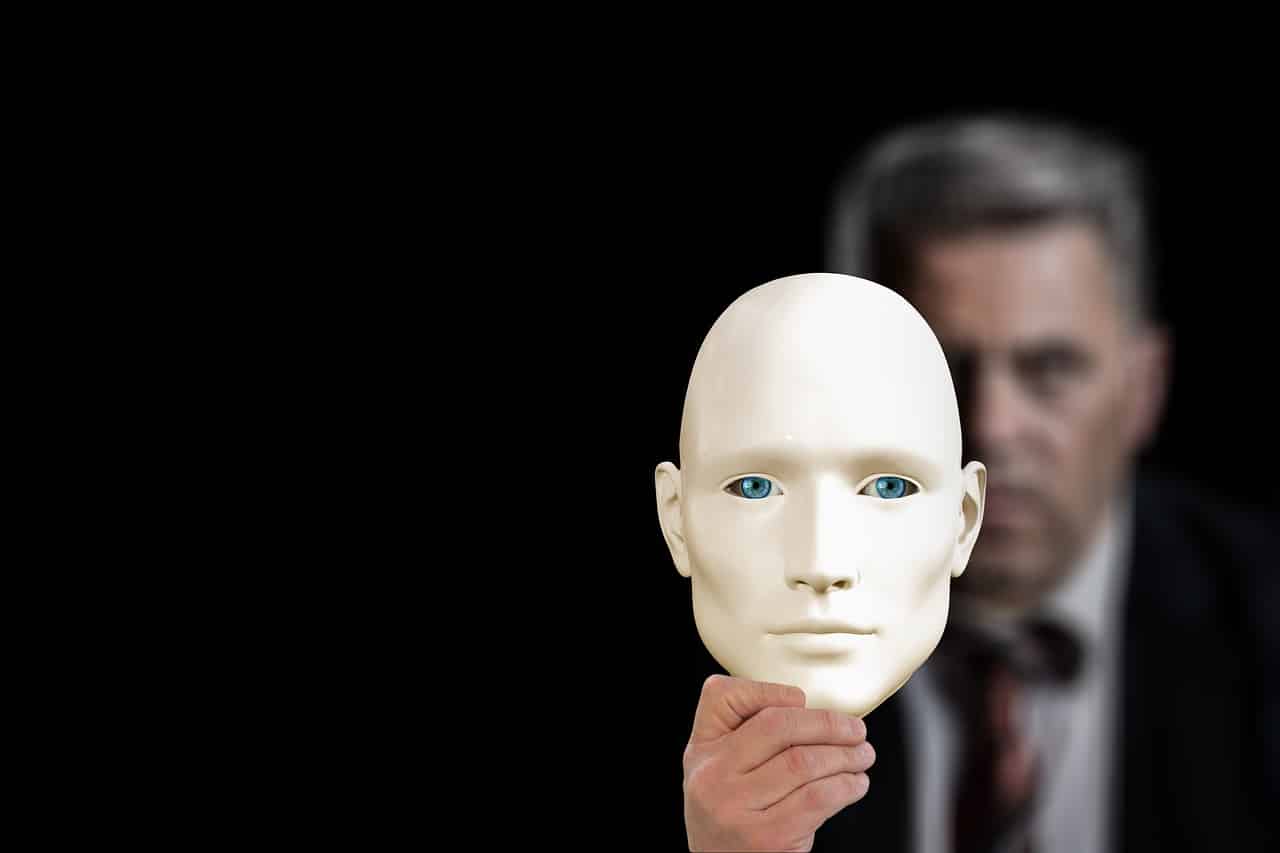

Michael Saylor Alerts Bitcoin Community Amid Rising Tide of Deepfake Scams

MicroStrategy founder and tech titan Michael Saylor has issued a warning about an influx of AI-generated deepfake scams targeting the Bitcoin community.

The alarm follows several reports last week flagging fake AI-generated videos of Saylor promising to “double people’s money instantly.” The fake ads prompted viewers to scan a QR code and send Bitcoin (BTC) to the perpetrator’s address.

One user posted on X (formerly Twitter) that a deepfake video of Michael Saylor had popped up on YouTube again.

In response, Saylor wrote on Sunday that “there is no risk-free way to double your Bitcoin.”

“MicroStrategy doesn’t give away BTC to those who scan a barcode,” he stressed.

⚠️ Warning ⚠️ There is no risk-free way to double your #bitcoin, and @MicroStrategy doesn't give away $BTC to those who scan a barcode. My team takes down about 80 fake AI-generated @YouTube videos every day, but the scammers keep launching more. Don't trust, verify. pic.twitter.com/gqZkQW02Ji

— Michael Saylor⚡️ (@saylor) January 13, 2024

He also revealed that his team takes down about 80 deepfake videos a day depicting him making fake crypto promises.

“My team takes down about 80 fake AI-generated YouTube videos every day, but the scammers keep launching more. Don’t trust, verify.”

The fake Saylor videos follow a recent wave of convincing deepfakes targeting crypto figures. In November 2023, fake videos of Ripple CEO Brad Garlinghouse surfaced promoting bogus XRP giveaways. Similarly, Cardano co-founder Charles Hoskinson was targeted by a deepfake in December, prompting him to warn immediately about the increasing sophistication of these fake videos.

As predicted, Generative AI scams are now here. These will be dramatically better in 12-24 months and hard for anyone to distinguish between reality and the AI fiction https://t.co/u7uaIEUodt

— Charles Hoskinson (@IOHK_Charles) December 15, 2023

Combating Crypto Deepfakes

Rapidly evolving AI technology also carries a grim reality: security and privacy concerns. A UCL report found that experts ranked manipulated video and audio as the most worrying criminal application of artificial intelligence.

Matt Groh, a research assistant with the Affective Computing Group at the MIT Media Lab, said that people can protect themselves from falling victim to deepfakes by using their own judgment. “You have to be a little skeptical, you have to double-check and be thoughtful,” Groh said.